SWY's technical notes

Relevant mostly to OS X admins

Alternate way to deal with XenServer storage issues

Posted on June 8, 2013

I’ve administered a small pool of 2 physical XenServers with shared storage for my employer’s Windows and Linux virtual servers for a few years. One issue that has come up under both XenServer 5.6 and 6.x is XenServer failing to remove a snapshot’s disk files when the snapshot is deleted. This becomes a problem with a VM backup tool such as PHDVirtual, where the backup server is itself a VM that, on a schedule, has the hypervisor:

- Take a snapshot of another running VM

- Attach that snap to the backup VM

- Read that drive and back it up to the configured storage

- Detach and delete the snapshot
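If you want to reproduce that cycle by hand, for example to check whether deleting a snapshot leaves its disks behind, the sketch below runs only the create and delete steps with xe (attaching and reading the disk are skipped). It assumes you run it in dom0, that the VM’s name-label is unique, and that `xe snapshot-uninstall ... force=true` is available in your XenServer version; the function name `snapshot_cycle` and the label prefix are hypothetical.

```shell
# Hypothetical helper: snapshot one VM, then delete the snapshot again,
# roughly mimicking what a snapshot-based backup tool asks the hypervisor to do.
# Assumes: run in dom0, VM name-label is unique; attach/read steps are skipped.
snapshot_cycle() {
  vm_name=$1
  label="manual-snap-test-$(date +%Y%m%d%H%M%S)"

  # xe vm-snapshot prints the UUID of the new snapshot.
  snap_uuid=$(xe vm-snapshot vm="$vm_name" new-name-label="$label") || return 1
  if [ -z "$snap_uuid" ]; then
    echo "snapshot of '$vm_name' returned no UUID" >&2
    return 1
  fi
  echo "created snapshot $snap_uuid ($label)"

  # Remove the snapshot record together with the disks that belong only to it.
  xe snapshot-uninstall uuid="$snap_uuid" force=true || return 1
  echo "uninstalled snapshot $snap_uuid"
}
```

After `snapshot_cycle Windev2`, running `xe vdi-list is-a-snapshot=true params=name-label` should not show any VDI carrying that label; if one is still there, you are seeing the leftover-snapshot problem.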

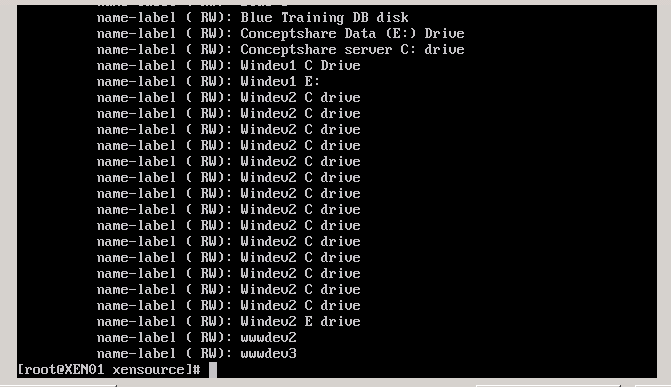

The result is a buildup of undeleted snapshot files taking up space on your storage repository (SR). One way to confirm whether this is happening is to run the following on the XenServer console:

xe vdi-list is-a-snapshot=true | grep name-label | sort

If you don’t expect any snapshots to exist, any that are listed here are leftovers.
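The `grep | sort` pipeline above prints one line per leftover VDI, which gets long when a snapshot is left behind every night. A small filter can collapse that into a count per name. This is a sketch that assumes xe prints each label as a `name-label ( RW): <name>` line, which is what I’d expect from `params=name-label`; `summarize_snapshots` is a hypothetical helper name.

```shell
# Hypothetical filter: count leftover snapshot VDIs per name-label.
# Assumes each label arrives as a "name-label ( RW): <name>" line from xe.
# sed keeps only the label text; sort makes identical names adjacent;
# awk prints "<count><TAB><name>" for each run of identical names.
summarize_snapshots() {
  sed -n 's/^[[:space:]]*name-label ( RW):[[:space:]]*//p' |
    sort |
    awk 'NR == 1 || $0 != prev { if (NR > 1) print cnt "\t" prev; prev = $0; cnt = 0 }
         { cnt++ }
         END { if (NR > 0) print cnt "\t" prev }'
}
```

Usage: `xe vdi-list is-a-snapshot=true params=name-label | summarize_snapshots`. A VM whose count keeps growing night after night is a good candidate for the cleanup steps below.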

These shouldn’t be here.

Citrix offers a command-line tool to address this, described in Knowledge Base article CTX123400. It’s called an offline coalesce and takes the form:

xe host-call-plugin host-uuid=<UUID of the pool master Host> plugin=coalesce-leaf fn=leaf-coalesce args:vm_uuid=<uuid of the VM you want to coalesce>
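Filling in the two UUIDs by hand is error-prone. Here is a sketch that looks both up by name and then issues the same plugin call. It assumes `xe pool-list params=master --minimal` returns the pool master’s UUID and that VM name-labels are unique; `leaf_coalesce_vm` is a hypothetical name.

```shell
# Hypothetical wrapper around the CTX123400 leaf-coalesce plugin call.
# Looks up the pool master and the VM UUID by name, then runs the plugin.
# Assumes: run in dom0, and the VM's name-label is unique in the pool.
leaf_coalesce_vm() {
  vm_name=$1

  # UUID of the pool master host, which the plugin call targets.
  master_uuid=$(xe pool-list params=master --minimal) || return 1

  # --minimal prints a comma-separated list if several VMs share the name.
  vm_uuid=$(xe vm-list name-label="$vm_name" params=uuid --minimal) || return 1
  case $vm_uuid in
    "") echo "no VM named '$vm_name'" >&2; return 1 ;;
    *,*) echo "more than one VM named '$vm_name'" >&2; return 1 ;;
  esac

  xe host-call-plugin host-uuid="$master_uuid" plugin=coalesce-leaf \
    fn=leaf-coalesce args:vm_uuid="$vm_uuid"
}
```

Usage: `leaf_coalesce_vm Windev2`, then watch /var/log/SMlog while it runs.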

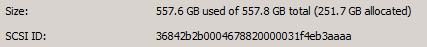

Earlier this week, I ran the above hoping to fix the large discrepancy between my SR’s used and allocated values, caused by the snapshots listed earlier that were never deleted but don’t appear in XenCenter.

The problem is, it didn’t work. Tailing /var/log/SMlog, I found the standout clue: “no space to coalesce”. So, great… a snapshot bug eats up all your space, and the tool meant to fix it can’t, because there’s no space.
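To put numbers on the used-versus-allocated gap from the console instead of XenCenter, you can read the SR’s size fields directly. This sketch assumes XenCenter’s “used” and “allocated” figures correspond to the SR parameters `physical-utilisation` and `virtual-allocation`, both reported in bytes; `sr_usage_report` is a hypothetical helper.

```shell
# Hypothetical helper: print an SR's used, allocated and total size in GiB.
# Assumes XenCenter's "used"/"allocated" map to physical-utilisation/virtual-allocation.
sr_usage_report() {
  sr_uuid=$1
  used=$(xe sr-param-get uuid="$sr_uuid" param-name=physical-utilisation) || return 1
  alloc=$(xe sr-param-get uuid="$sr_uuid" param-name=virtual-allocation) || return 1
  size=$(xe sr-param-get uuid="$sr_uuid" param-name=physical-size) || return 1

  # xe reports these values in bytes; convert to GiB (1024^3 bytes).
  awk -v u="$used" -v a="$alloc" -v s="$size" 'BEGIN {
    g = 1024 ^ 3
    printf "used %.1f GiB, allocated %.1f GiB, size %.1f GiB, used minus allocated %.1f GiB\n",
      u / g, a / g, s / g, (u - a) / g
  }'
}
```

Usage, with a hypothetical SR name: `sr_usage_report "$(xe sr-list name-label='Shared SR' params=uuid --minimal)"`. Running it before and after a cleanup shows how much space was actually reclaimed.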

A day later, this became quite concerning. I don’t know what Xen will do when an SR hits 100% capacity, but I doubt I’ll enjoy it.

I decided to gamble and see what would happen if I moved a disk to a different SR. Lacking Storage XenMotion, I shut down a non-critical VM (the Windev2 machine listed above), detached its C: drive, and had XenServer move it to a different SR. It took much longer than expected (about 30 minutes for 48 GB), but in the end I regained far more than 48 GB on the original SR:

557.6 GB - 281.9 GB = 275.7 GB of snapshot space wasted by XenServer bugs, recovered by moving the disk to a different SR. Last night, my nightly PHDVirtual backups were once again able to take a snapshot and perform a proper backup.
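The move itself was done in XenCenter. A rough CLI counterpart for a disk that is already detached is to copy the VDI to the target SR with `xe vdi-copy`, which prints the new VDI’s UUID. That this matches what XenCenter does internally is my assumption, and the sketch deliberately leaves destroying the original and re-attaching the copy to you, after you’ve checked the copy. `move_vdi` is a hypothetical name.

```shell
# Hypothetical helper: copy a detached VDI to another SR and print the new VDI UUID.
# Refuses to run while any VBD still references the VDI (i.e. it is not detached).
# The original VDI is left in place; destroy it only after verifying the copy.
move_vdi() {
  vdi_uuid=$1
  dest_sr_uuid=$2

  vbds=$(xe vbd-list vdi-uuid="$vdi_uuid" params=uuid --minimal) || return 1
  if [ -n "$vbds" ]; then
    echo "VDI $vdi_uuid is still attached (VBDs: $vbds); detach it first" >&2
    return 1
  fi

  # vdi-copy writes a full copy onto the destination SR and prints its UUID.
  xe vdi-copy uuid="$vdi_uuid" sr-uuid="$dest_sr_uuid"
}
```

Once the copy checks out, `xe vdi-destroy uuid=<old VDI UUID>` removes the original, and the copy can then be attached to the VM again, for example from XenCenter.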

Lessons learned:

- When leaf-coalesce fails, moving a disk to a different SR can clean up the wasted storage space

- If I had to do it all over again, I think I’d go with VMware. XenServer has had problems with snapshot management for a long time, and they still haven’t figured it out.

I have the same problem in my pool of 5 XenServers. I haven’t tested the leaf-coalesce method yet, but I’m glad you shared an alternative in case it doesn’t work for me.

My advice is to start with the leaf-coalesce, and be patient. I’m now experiencing the same SR_BACKEND_FAILURE_44 because a different VM has a snapshot chain 17 deep. I started the leaf-coalesce over 2 hours ago, and it’s still chugging along. Use

tail -f /var/log/SMlog

to monitor it. I’m keeping my fingers crossed that entries containing “Num combined blocks” and “SUCCESS” are good signs, and that if I just sit tight, it will get the job done. I have leaf-coalesced successfully before, so it can work, but it seems to fail when there isn’t much free space left on the SR.
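SMlog is busy, so it can help to filter the tail down to the lines mentioned in this thread. A minimal sketch: the patterns are just the strings quoted in the post and comments, plus the word “coalesce”, not an exhaustive list of what the storage manager logs, and `coalesce_log_filter` is a hypothetical name.

```shell
# Hypothetical filter for SMlog: keep lines mentioning coalescing,
# "Num combined blocks", "SUCCESS", or "no space" (case-insensitive).
coalesce_log_filter() {
  grep -i -E 'coalesce|num combined blocks|success|no space'
}
```

Usage: `tail -f /var/log/SMlog | coalesce_log_filter`.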

An update: the last leaf-coalesce I ran went for around 20 hours and didn’t work. I have a ticket open with Citrix. Their official advice is to export the VM and then import it again, though they say my detach, move, reattach method *should* work, but sometimes won’t.
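For reference, Citrix’s export-then-import route can be done from the CLI with `xe vm-export` and `xe vm-import`. A minimal sketch, assuming the VM is shut down, its name-label is unique, and the target path has room for the full .xva (dom0’s own root filesystem is usually small, so a mounted share is a safer target). `export_import_vm` is hypothetical, and it leaves the original VM in place, so remove that yourself once the imported copy boots.

```shell
# Hypothetical helper: export a halted VM to an .xva file, then import it
# onto the given SR; prints the UUID of the newly imported VM.
export_import_vm() {
  vm_name=$1
  xva_path=$2
  dest_sr_uuid=$3

  # Send vm-export's progress/status text to stderr so stdout holds only the UUID.
  xe vm-export vm="$vm_name" filename="$xva_path" >&2 || return 1

  # vm-import prints the UUID of the imported VM.
  xe vm-import filename="$xva_path" sr-uuid="$dest_sr_uuid"
}
```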

If you have to perform an export and import, note that it will take much longer than moving virtual disks. XenServer cannot use more than 10% of the network speed of your management NIC. If you have a 1 Gbit/s NIC, exporting AND importing will be processed at a stunning 100 Mbit/s (about 12.5 MB/s) at most! Nice work, Citrix!
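Taking the 10% figure at face value (it is unconfirmed), 10% of a 1 Gbit/s link is 100 Mbit/s. Assuming the whole 48 GB disk from the post is transferred each way, decimal units, and no other overhead, a rough estimate for one export plus one import is:

```latex
\begin{aligned}
r &= 0.10 \times 1000\ \text{Mbit/s} = 100\ \text{Mbit/s} = 12.5\ \text{MB/s} \\
t_{\text{one way}} &= \frac{48\,000\ \text{MB}}{12.5\ \text{MB/s}} = 3840\ \text{s} = 64\ \text{min} \\
t_{\text{export + import}} &\approx 2 \times 64\ \text{min} = 128\ \text{min}
\end{aligned}
```

That is more than four times the roughly 30 minutes the SR-to-SR move of the same disk took in the post.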

I hadn’t heard of the 10% limitation, so I can’t confirm or deny it. But presuming it’s accurate, if there’s any way you can move disks between storage repositories instead of exporting and importing, that’s what you’ll want to do.